Samsung AI Forum Offers a Roadmap for the Future of AI

on September 18, 2018

It wasn’t that long ago that the idea of building technologies with ‘brains’ that learn and are even structured just like ours seemed like science fiction.

Just ask the distinguished speakers at the “Samsung AI Forum 2018”. Held in Seoul from September 12th to 13th, the second edition of Samsung Electronics’ artificial intelligence (AI) forum featured accomplished AI experts, who discussed how groundbreaking advancements are not only helping to create technology that makes our lives more comfortable, convenient and efficient, but also teaching us more about how our own minds work.

Unsupervised Learning Takes Center Stage

Attendees of the Samsung AI Forum 2018 listen intently to the opening address by Kinam Kim, President and CEO of Samsung Electronics.

The forum’s first lecture was delivered by Yann LeCun, founding director of the New York University Center for Data Science and one of the world’s leading minds in the field of deep learning.

LeCun’s speech set the stage for the discussions of unsupervised learning that followed over the course of the two-day event. He explained why he and many of his peers believe that unsupervised learning, including the approach he calls self-supervised learning, represents the future of AI. He also examined the potential applications (and limitations) of unsupervised learning algorithms, and explained how they differ from supervised and reinforcement learning algorithms.

As LeCun explained, supervised learning algorithms learn from labeled datasets, using the labels as an answer key against which they can evaluate their accuracy. In other words, each example in the training dataset includes the answer that the algorithm should produce. With reinforcement learning, an algorithm is instead trained through a reward system that gives it feedback on how well each action it takes works out in a given situation. It relies on this feedback, rather than labeled datasets, to learn to choose the actions that offer the greatest reward.
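To make the contrast concrete, here is a minimal Python sketch that is not taken from LeCun’s talk. The supervised half fits a line to labeled (x, y) pairs by least squares; the reinforcement half is a textbook epsilon-greedy “multi-armed bandit” agent that never sees a correct answer, only rewards. The data, the reward values and the function names `fit_slope` and `epsilon_greedy` are hypothetical, chosen purely for illustration.

```python
import random

# Supervised learning: every training example comes with its answer (label).
# Here we fit y ~= w * x by least squares on hypothetical labeled pairs (x, y).
def fit_slope(examples):
    """Return the least-squares slope w for labeled examples [(x, y), ...]."""
    num = sum(x * y for x, y in examples)
    den = sum(x * x for x, _ in examples)
    return num / den

labeled = [(1.0, 2.0), (2.0, 4.1), (3.0, 5.9)]
w = fit_slope(labeled)  # about 1.99

# Reinforcement learning: no labels, only a reward after each action.
# A minimal epsilon-greedy bandit agent learns which action pays off best.
def epsilon_greedy(rewards, steps=1000, epsilon=0.1, seed=0):
    """rewards[a] is a hypothetical fixed reward for taking action a."""
    rng = random.Random(seed)
    values = [0.0] * len(rewards)  # estimated value of each action
    counts = [0] * len(rewards)    # how many times each action was tried
    for _ in range(steps):
        if rng.random() < epsilon:
            a = rng.randrange(len(rewards))  # explore: try a random action
        else:
            a = max(range(len(rewards)), key=lambda i: values[i])  # exploit best estimate
        r = rewards[a]  # the environment's feedback for this action
        counts[a] += 1
        values[a] += (r - values[a]) / counts[a]  # running average of observed reward
    return values

values = epsilon_greedy([0.2, 0.8, 0.5])
best = max(range(len(values)), key=lambda i: values[i])
```

The supervised fit recovers roughly the slope of 2 present in the labels, while the bandit agent, given no answer key at all, settles on the action with the highest reward purely from trial and error.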

With unsupervised learning, the algorithm must make sense of an unlabeled dataset on its own: a set of examples with no correct answer or desired outcome attached. Without an answer key, these algorithms can be less predictable than their supervised counterparts, but they can also take on more complex tasks, such as uncovering structure in data that no one has labeled.
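As a rough illustration of learning without an answer key, the sketch below uses k-means clustering, a classic unsupervised technique (not one the forum’s speakers are reported to have presented), to sort unlabeled numbers into two groups. The data and the function name `kmeans_1d` are hypothetical.

```python
def kmeans_1d(points, k=2, iters=20):
    """Group unlabeled 1-D points into k clusters using Lloyd's k-means algorithm."""
    # Simple deterministic initialization: pick evenly spaced values from the sorted data.
    centers = sorted(points)[:: max(1, len(points) // k)][:k]
    clusters = [[] for _ in range(k)]
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        # Assignment step: attach each point to its nearest center.
        for p in points:
            nearest = min(range(k), key=lambda i: abs(p - centers[i]))
            clusters[nearest].append(p)
        # Update step: move each center to the mean of its cluster
        # (keep the old center if a cluster happens to be empty).
        centers = [sum(c) / len(c) if c else centers[i] for i, c in enumerate(clusters)]
    return centers, clusters

# Hypothetical unlabeled data: no point says which group it belongs to.
data = [1.0, 1.2, 0.8, 5.0, 5.3, 4.9]
centers, clusters = kmeans_1d(data)
```

Nothing in the input tells the algorithm how the points should be grouped; the two clusters, centered near 1.0 and 5.07, emerge from the structure of the data itself.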

LeCun used training self-driving cars as a key example of unsupervised learning’s potential. “A lot of people who are working on autonomous driving are hoping to use reinforcement learning to get cars to learn to drive by themselves by trial and error,” said LeCun. “The problem with this is that, because of [reinforcement learning’s inherent inefficiencies], you’d have to get a car to drive off a cliff several thousand times before it figures out how not to do that.”

LeCun explained that, unlike reinforcement learning models, which rely on trial and error, unsupervised learning models might be able to anticipate what to do in a situation like this without first experiencing it, demonstrating a capability similar to what we would call common sense.

He also discussed his experience developing artificial neural networks, specifically convolutional neural networks (ConvNets), and showed how they are used not only in self-driving cars but in a wide variety of other applications, including medical signal and image analysis, bioinformatics, speech recognition, language translation, image restoration, robotics and physics.

LeCun’s presentation was followed by a lecture from another leading light in the field of deep learning: University of Montreal professor Yoshua Bengio. Professor Bengio’s lecture focused on stochastic gradient descent (SGD), an optimization method used to train artificial neural networks by repeatedly adjusting their parameters to reduce their errors.

As Bengio explained, “[SGD] is really the workhorse of deep learning. This is the optimization technique that is used everywhere for supervised learning, reinforcement learning and self-supervised learning. It’s been with us for many decades and it works incredibly well, but we don’t completely understand it yet.”
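For readers unfamiliar with SGD, here is a minimal textbook sketch in Python. It assumes a simple linear model rather than a neural network and is not drawn from Bengio’s research: the parameters are nudged against the gradient of the squared error on one example at a time, visited in random order, which is what makes the descent “stochastic.” The learning rate, epoch count, data and the function name `sgd_linear_regression` are illustrative assumptions.

```python
import random

def sgd_linear_regression(data, lr=0.05, epochs=200, seed=0):
    """Fit y ~= w * x + b with plain SGD on squared error, one example at a time."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    data = list(data)  # copy so shuffling does not modify the caller's list
    for _ in range(epochs):
        rng.shuffle(data)  # 'stochastic': visit the examples in a random order
        for x, y in data:
            err = (w * x + b) - y  # prediction error on this single example
            # Gradient of 0.5 * err**2 is err * x for w and err for b.
            w -= lr * err * x
            b -= lr * err
    return w, b

# Hypothetical noise-free data generated from y = 3x + 1 for x in [-1, 1].
data = [(x / 10, 3 * (x / 10) + 1) for x in range(-10, 11)]
w, b = sgd_linear_regression(data)
```

Because this toy data contains no noise, `w` and `b` converge close to 3 and 1. Neural network training applies the same update rule, typically on small mini-batches of examples rather than single ones, with the gradients computed by backpropagation.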

Bengio’s presentation gave attendees a better understanding of SGD, with a specific focus on how SGD variants affect neural network optimization (how well training reduces errors on the training data) and generalization (how well the trained network performs on data it has not seen before). Bengio explained that the traditional view of machine learning treats optimization and generalization as neatly separate, but that in practice the two are intertwined. He also presented detailed research findings on how SGD-based learning techniques affect both.

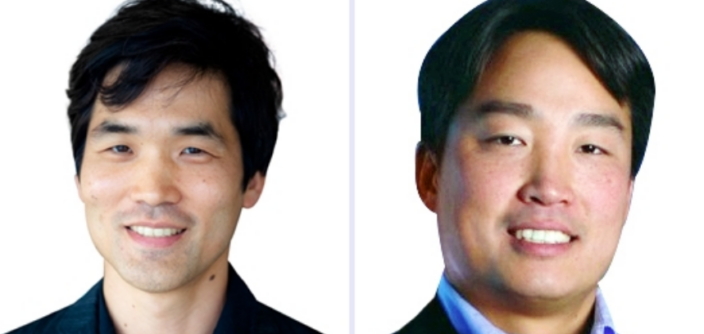

(Clockwise from top) NYU professor Yann LeCun, University of Montreal professor Yoshua Bengio, MIT Media Lab professor Cynthia Breazeal and Samsung Research Executive Vice President Sebastian Seung.

Could Unsupervised Learning Unlock the Secrets of the Brain?

Sebastian Seung, Executive Vice President of Samsung Research and Chief Research Scientist of Samsung Electronics, delivered a particularly illuminating presentation that outlined why unsupervised learning will be essential for developing AI with human-level mental capabilities.

Seung described how the convolutional neural networks that LeCun had examined in detail are in fact based on insights gained through the study of neuroscience. He also discussed how his research in both artificial and biological neural networks led him to study ways to apply AI to gain a better understanding of how our brains are wired.

Seung stressed that the model for designing unsupervised learning networks lies in the brain’s cortex, and highlighted a recent study involving his team that used AI to map all of the neurons contained in one cubic millimeter of a mouse’s visual cortex, more than 100,000 in total.

The unsupervised learning algorithm the researchers used allowed them not only to create a 3D reconstruction of the neural network’s wiring, but also to label and color in individual cells and their components. “That’s the magic of deep learning,” said Seung. “If a human had to color all that in, it would take about 100 years of work. And that’s with no coffee breaks or sleeping.”

Living with Social Robots in ‘10 to 20 Years’

The speech delivered by Cynthia Breazeal, founder and Chief Scientist of Jibo, Inc. and founding director of the Personal Robotics Group at the Massachusetts Institute of Technology (MIT) Media Lab, shifted the focus to applying AI to advanced robotics.

Breazeal’s speech, entitled “Living and Flourishing with Social Robots,” discussed approaches needed to develop autonomous systems that utilize AI to enhance our quality of life. As Breazeal explained, autonomous, socially and emotionally intelligent technologies—robots with what’s known as ‘relational AI’—present a wide range of exciting benefits.

“I’m really excited to think about the next 10 to 20 years—of having these robots actually become a part of our daily lives,” said Breazeal.

The fascinating presentation highlighted helpful companion technologies in particular, and included specific examples of ways that robots could be used to assist children and older adults. Breazeal noted studies in which AI robotic companions were given to patients at a children’s hospital, as well as kindergarten-age students and senior citizens.

Videos of the studies showed how the children in the hospital drew comfort from having a peer-like companion by their side, and demonstrated how robots can be used to boost learning. As Breazeal explained, “This is about a different vision for AI. There’s so much emphasis right now on tools for professionals, and there’s not a lot of deep thinking around how AI is going to benefit everyone.” The studies, Breazeal added, “show that there’s a lot of promise with these technologies in the real world… making a real difference.”

This year’s forum also included a diverse array of speeches that offered a broad look at the state of AI development today, with presentations on advances in reinforcement learning, mutual information neural estimation, socially and emotionally intelligent AI, personal assistant robots, and precision medicine via machine learning. The developments discussed at the Samsung AI Forum 2018 represent great strides toward an AI-connected future.